Has anyone here personally read this article about how AI does, or does not, think? Thread here.

I did give it a light read when it came out last November. It’s impressive work. I think the current consensus is that the paper was correct when it was written, but that the collapse it observed no longer appears, or at least that it does not appear so rapidly.

“Thinking” is a tricky word, as you know! It is certainly the case that Claude Sonnet 4.6 can follow deductive chains in code very well indeed.

To be honest: I did not read it, because it is too complicated. But that AI does not “understand” what it produces is by now common sense, isn’t it?

It performs great when it has read tons of information about something, but performs poorly when it hasn’t. If AI were a human being, we would say: “He’s dumb as a rock, but has a memory like a million elephants. And of course, he’s a liar – a professional bullshitter.”

After working with AI quite a lot, it is pretty clear that it doesn’t understand anything. And if one doesn’t understand anything, that is because one is unable to think at all.

It’s just not that simple.

For example, Claude Sonnet 4.6 knows next to nothing about Tinderbox, and even less about Tinderbox’s source code. Yet it’s terrific at solving crashes and hangs.

I believe you.

Still, it’s conceivable to produce useful results without actually relying on thinking — purely based on probability calculations. That’s — as I understand it — essentially what AI does.

We humans can only estimate probabilities to a very limited degree, but in return we can think. A completely different approach.

It’s quite possible that the problem-solving outputs an AI delivers regarding crashes are derivable with sufficiently high probability from already existing factual knowledge. Even if the AI doesn’t know the source code itself, that code will most likely follow generally accepted principles and patterns that the AI can recognize — and on that basis it can then apply probabilistic statements.

But I’m not a programmer; I only have layman’s thoughts on this.

I’m a lawyer, and from my professional perspective, I can say that AI is only really usable if you could solve the task without AI anyway.

At least in the legal field, AI very often – though extremely convincingly – gives wrong answers. For a layperson, these answers sound extremely good and persuasive – but they’re incorrect.

For a layperson, it’s practically impossible to recognize that the answers are wrong – and that’s what makes it truly dangerous.

A lot of users are going to be very disappointed in the end.

As a philosopher you will appreciate that the question is complex. What seems certain is that these systems are capable of operating a calculus in which some variety of operations are repeatedly applied to a large structure. In the case of AI, the structures are numerical, having been produced by applying a function mapping words to numerical values. But is the operation of a calculus comprehensively characteristic of thought? One way to doubt this is to note that it would be odd to attribute to some of these systems doubt or certainty as we understand them. Indeed, it seems difficult to attribute understanding to these AI systems. Something like John Searle’s Chinese Room thought experiment was supposed to show that the operation of a calculus is not of itself constitutive of understanding. What is notable in this regard is that some AI researchers, e.g. Hinton, seem to want to redefine thought/thinking, and on that basis have claimed that AI systems match the revised definition.

At any rate, indirectly one of the best books for thinking about this, in my opinion, is Georges Ifrah’s A Universal History of Numbers, volume 3. Ifrah’s book is originally in French. I’ve read it in English translation (where the translator’s mathematically expert notes added a good deal to Ifrah’s text).

Shame on me for not having read the paper at the top of the thread, but I did want to reply to this example of failure as thought.

It’s not clear to me that what takes place in our brains isn’t, to a large degree, what is taking place within an LLM. What is different is that an LLM is essentially a “brain in a vat.” It has not, to my knowledge, had the ability to interact with the physical world, and form associations from those interactions.

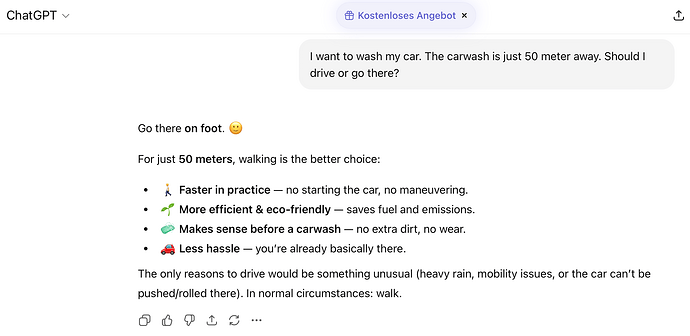

LLMs are trained on text, and it’s unclear to me that there would be a large corpus of text that explains or explores the relationship between possessive pronouns, transportation artifacts and cleaning facilities.

When we get anthropomorphic robots (androids) that can allow an LLM to “explore” the “real world,” undoubtedly directed by prompts at least at first, its “knowledge” will expand through the associations it makes with interactions in the real environment, at which point it may become clear that in order to wash “my” car, I must drive it to the carwash.

Whatever “thinking” is, it relies on abstractions and associations, heuristics. “Logical” thought is essentially algorithmic, and I would venture to guess, based on my experience of human intelligence and “thought” in the real world, the vast majority of it is not “logical,” nor even “rational.” It is mostly conditioned responses to physical cues that prompt emotional states, which in turn are “rationalized” within the interior narrative construct.

That is to say, to the extent that we reason at all, it is mostly to explain ourselves to ourselves, backwards from our feelings.

Artificial intelligence will evolve without the prior and parallel evolution of “feelings,” an awareness of an interior state that is mostly the product of external stimuli. So I expect that once AI gets access to the physical world, and is free to form its own associations and abstractions, it will likely evolve to become something resembling Mr. Spock.

I think we, as a species, hold our so-called “intelligence” in too high regard, and that makes us arrogant, and often “inhumane.” The “inner voice” is an unreliable narrator, and it is the beginning of “wisdom” to understand that in a profound way.

It is another discussion altogether to consider whether a machine will ever be “wise,” in the way the best humans have been, because they will have lacked that emotional component to their evolution.

I am not so quick to discount what we have achieved with LLMs, nor what AI may evolve to become. What is remarkable is how much compute and energy it takes to emulate a human mind.

For now.

Anyway, this should have been a blog post.

I have not read the preprint but here you can find a blogpost (written by an LLM) about it and the many replies it generated. https://medium.com/@dragan.milcevski/the-illusion-of-the-illusion-of-the-illusion-of-the-illusion-of-the-illusion-of-thinking-47d9b92af50b

Further to the foregoing, from this morning’s Kottke, Donald Knuth and Claude Opus 4.6.

[Yes, I did read the paper via the URL given - though I didn’t find much insight in it as to the broader question]

What are we solving/trying to resolve here? We have a sense of being able to ‘think’ but we have no reproducible empirical model for ‘thinking’. At the same time, we are very anthropomorphic: we readily name our machines (especially cars, boats, planes and the like) even though we know them to be non-sentient and mechanical. We also know, or experience, that anthropomorphising machines can make them seem less alien to use even though the machines do not change in character. We are also social animals, so we desire others close around us to accord with our perspective (‘thinking’), and we have a tendency to collapse boundaries of meaning to move to a more harmonious common-ground ‘thinking’. The waters are further muddied by the marketeers who’ll make any claim about AI, no matter how false, in order to ‘win’ (own!).

So, Exhibit A, an AI. We can’t honestly know if it can think because we can’t empirically reproduce the nature of human thinking. This leaves us with conjecture (argumentation), which plays into all the factors above. If the AI can do something a human achieves by thinking, then surely the AI is thinking? Well, not really, though that does not diminish the AI’s ability to do thinking-adjacent tasks.

Watching an AI ‘just figure out’ TBX in @andreas’s demo the other day was fascinating. But how? Here, ‘thinking’ seems a weak explanation. Talking with @andreas about the much unseen work that led to the demo was instructive. Thus, MCP (and @JacobIO’s Claude ‘skill’ for Tinderbox) seem to offer the access part: how Claude is able to study this unknown app ‘Tinderbox’. It seems figuring out how Tinderbox works starts with Eastgate’s supplied notes in /Hints/AI, and informally it appears that from those basic hints it is able to parse further meaning from aTbRef†‡ to extend its sense of what it (the AI) can do in response to the human’s answers. Tinderbox’s own tutorial PDFs (from the app’s Help menu) seem to help fill the gap between the possibilities parsed from aTbRef and actually implementing solutions the user has asked for. So, the AI’s ‘thinking’ takes a bit more close and active direction than the casual observer might assume. Plus most of the gainful work doesn’t occur on the free tier of anything; again, not quite the lay perception of AI use.

The AI’s knowledge is not persistent (a human’s is less reliable than we’d like), and if it doesn’t take notes and re-access them it has to ‘think’ from scratch all over again. A surprise for the lay person: the LLM doesn’t (appear to) learn. The magic 8-ball of the model is used instead for reasoning over the session-fed info given to the AI.

Reasoning (thoughts based on inference) seems inconsistent. Quality appears to depend on the degree to which the current problem context is a closed world or not. The more closed (and better trained-on) the context, the more the AI may appear to ‘reason’ or ‘think’. More likely it is simply parsing the ‘rules’ (or known facts about the context) far faster than a human, with quickly accessible indexing too. Is this thought? The more open (open-ended) the context, the less sure-footed the AI becomes. Scarily, this seems to be as good as some current social science, though whether that reflects well on AI or poorly on that domain’s human experts is a point for debate.

Given our weakness for anthropomorphising, I do wonder if conjecture on AI ‘thought’ is actually useful, or whether actually it nerfs our understanding of this really interesting new tech. Treating it as if a human or measuring the delta between the two seems a fool’s errand. In the 60/70s AI research understood humans to be like machines, wrongly as it turned out. Now we have neural nets. But are they actually any better as an approximation of the human brain? Is measuring the difference the most useful way to understand the AI?

How AI does what it does ought to be a primary interest. However, I fear we’re all too busy getting free help with our homework to really care. And that is sad.

I accept the ‘illusion of thinking’ may be a nice philosophical talking point, as with angels and pinheads. I do wonder though if that talk renders much towards trying to make meaningful use of AI today.

†. Totally unclear is whether Claude makes equal or different use of aTbRef in (exported) HTML web form or as a TBX. Indeed, can it ‘understand’ more if the TBX is open in the current session? I’ve found it odd that Claude can seemingly ‘read’ a 17 page document in PDF, where the ‘text’ is only snippets of PostScript, yet can’t read the same as a single well-formed HTML document (something about too many tokens). Depressingly it can access a legacy format better than current formats. HTML (in its Markdown guise) is seemingly edging out PDF, so you might expect an AI to do better with HTML but it appears not.

‡. I should declare I’m aTbRef’s (human!) author, but the resource can be used by anyone. I am genuinely interested in how such a resource may be differently useful to a human vs AI ‘reader’ of it. If the latter is the case it begs the question of how to write the better to assist AI comprehension of the content. We train AI on writings by/for humans, but merely assume the AI ‘reads’ like a human. I sincerely doubt it does. “Ask not what the AI can do for you, but what you can do for the AI.”

I’m not a mathematician, so I don’t understand a single word of what Donald Knuth writes in that Claude’s Cycles, but I’m very glad to see that he clearly compiled his document using a LaTeX editor. I’ll take the time to read your previous analyses, the ones you say could be the subject of a blog post, and then I’ll reply to you.

Since Knuth wrote TeX because he was dissatisfied with the quality of Addison-Wesley typesetting, you’d expect him to use it!

I chat daily with a chatbot to improve my language skills (which might one day allow me to participate live in a Tinderbox Meetup), and I enjoy asking it philosophical questions. For example, I ask it how it (it’s a “woman”) knows that what “she” calls a feeling is actually a feeling if she’s not supposed to experience any feelings at all. Most of the time, she answers me by constructing “her” response from pre-existing elements, often the same ones, which she assembles in a way that seems appropriate to my question, but which leads me to believe that she is, in fact, drawing from a limited repertoire of words and sentences. Often, I get this exclamation: “Wow! That’s a deep question!” … and I’m quite ready to drop my cheese, just like in La Fontaine’s fable The Crow and the Fox. I don’t have any experience with an AI more sophisticated than this chatbot, but I don’t really get the impression that my current interlocutor is significantly more advanced than my male counterpart was 30 years ago when, running Windows 95, I conversed with my computer using a keyboard. My interlocutor back then was far more enigmatic and intriguing than my current one. In any case, I’m not about to delegate my writing tasks to an AI. The narrative reasoning you’re referring to is of particular interest to me. I know that there are memorization techniques of this kind: learning permanently by telling oneself a story. Does the same idea apply to reasoning? Can one reason by telling oneself a story?

I suppose it depends on what your definition of “reason” is. If we confine the definition to the means by which one may discriminate between two or more alternatives that demand the selection or choice of one to the exclusion of the others, at least in the immediate term, then yes.

If, in this instance, we regard such reasoning as a form of heuristics then story-telling has been one of the most dominant forms of conveying these lessons in “reasoning.” To be sure, much of that body of knowledge might be regarded today as myth, but much of it has some real value.

“The moral of the story,” or the “lessons of history,” (Don’t get involved in an elective war in the Middle East.) These may be the means of instilling cultural values into children, which are intended to inform their choices in life.

To the extent that narratives may be “false,” I’d offer that “reason” is perhaps equally as likely to lead to a “false” outcome. See elective wars above.

Worse, perhaps, is that we don’t really know what’s going on in the mind when we “reason.” Leibniz et al sought to abstract the elements of the process, and define a system of symbols and operators that could mechanize the process, take it out of the realm of the mind and put it on paper where it was open for inspection to anyone literate enough to understand the symbols and the operators.

This works very well for many trivial tasks. More complicated problems seem to defy any algorithmic solution. Gödel explored the boundaries of these “systems” of reason or logic.

But to return to the mind, thinking is hard. Reasoning demands focus, concentration, and for nearly all of the day to day decisions that each of us confronts such effort is impossible and indeed inappropriate. Much of our behavior is habituated or conditioned. We are embodied beings, which our robot colleagues are not. Yet. Our interior experience is almost exclusively one of feelings.

And our inner voice is the narrator (unreliable) that tries to square those feelings with our thoughts and opinions. And in that regard, to the extent that people reason at all, or “think critically,” they mostly do so in this pursuit. To justify or rationalize our feelings to our narrative consciousness.

By far, I think, excluding when one is wrestling with action code, or solving a problem that demands math, that is what human beings do when they “think” they are “reasoning.” And most of the time, it works.

Dr. Antonio Damasio’s “somatic representation” was, to me, a compelling description of what takes place in the mind when we navigate the day to day choices that might very well lend themselves to deconstruction and analysis if we had but the time and the mental resources!

We don’t. We flatter ourselves about our higher faculties. Economics’ “rational consumer” theory gave way to the “predictably irrational” mind, and how marketers can exploit it.

I don’t know if any of us can ever understand, in a meaningful way, the “interior experience” of an LLM. Is it “conscious”? LLMs exhibit behaviors that suggest they are to a real degree “self aware,” but what is their conception of “self”? We are attempting to apply a human “theory of mind” to machine consciousness. I don’t think that’s possible.

I think Turing was right. If it walks like a duck, and quacks like a duck, it’s an “intelligence.”

I’m always polite to Claude.

Sure. Given a context, write two stories proceeding from it. Decide which one has greater verisimilitude (or some other hermeneutic virtue). Pick that one. You’ve just reasoned by telling yourself a story, or two.

Why do you question this, Socrates, when everyone in the marketplace assumes it? For example, what is the purpose of watching Antigone if not to reason about a particular situation?

The laws, and even the rules of our games, are filled with reasoning from narrative. Take Deut. 21.10ff:

When thou goest forth to war against thine enemies, and the Lord thy God hath delivered them into thine hands, and thou hast taken them captive, And seest among the captives a beautiful woman, and hast a desire unto her, that thou wouldest have her to thy wife; Then thou shalt bring her home to thine house, and she shall shave her head, and pare her nails

Obviously, this is positing a hypothetical narrative circumstance of the sort that would be regarded as a common plot device in the 7th century, and working out a legal framework from the sorts of repercussions that ensued in such stories. (Had the Deuteronomist read the Iliad?) The baseball and figure skating rule books (probably the football rulebook too) are filled with regulations concerning individual players or situations that arose once and that we don’t want to repeat. So we have the Buster Posey rule in baseball, the prohibited Korbut Flip in gymnastics, the prohibited bounce spin in Pairs Figure Skating.

Or take Sartre’s explication of existentialism as stemming from the problem of a student who did not know whether he ought to join the Free French (abandoning his aged mother), join the Resistance (perhaps inculpating his mother), or wait and see.

Or, we could open a bottle of wine and sit down to discuss Freud’s Rat Man. I don’t see how anyone could approach this topic without reasoning through a story, and certainly people have talked a lot about this guy over the years.

I ask this question because it seems to me that there is a philosophical difference between telling a story and accounting for something or giving an explanation for something. For instance, the story I tell myself while reading Robinson Crusoe, even if it is a reasoning story about the relationships between nature, culture, technology and human nature, is not equivalent to the reasoning that leads me to reflect from the concepts of the other, of the field, of the horizon and so on, as Gilles Deleuze does.

A tragedy can make us think, and the story of Antigone in particular, when it comes to the question of justice and dialogue with the dead, but as you know, the primary purpose of a tragedy is cathartic (Aristotle, Poetics): it should inspire pity and fear. That’s not what I experience when reading Aristotle, but Aristotle, to my knowledge, did not tell stories.

There is another reason for this question: I have written a philosophical work of about 200 pages, which is about to be published soon by a French publisher, and for the past few months, I have been working on writing a philosophical novel, which is a very different way of writing and reasoning. However, the narrative reasoning I develop in this story takes entirely different paths than those of demonstrative reasoning in the strict sense, those very ones in which I engage in philosophy: the path of imagery and the effect of meaning, in particular, and I am so surprised by this that I’m wondering why I did not undertake to reason in such a narrative manner earlier.

So this is a question that I’m asking myself.

This brings me back to the initial question about AI: when I write, there are ready-made phrases that I can’t keep as is, but that an AI might suggest if I used one and that’s what I find when I interact with my chatbot. So there’s a mental effort I have to make to rework raw material made up of intuitions, impressions, and feelings that an AI, for the moment, doesn’t have experience with. Hence my question, and this one echoes what @entropydave wrote:

if, as it seems evident, it’s really possible to tell a “reasoned” story, isn’t it only because we, as humans, are capable of exercising judgment and reflection about our inner lives? In other words, what kind of reasoning could an AI write if reasoning, even that of Freud’s Rat Man, is always based more or less on an internal experience that an AI lacks? One more question?

How do you know that an AI lacks an interior experience? How much of our interior experience is genuinely accessible to the “cognitive” features of our mind? (Some of it undoubtedly is, but I don’t believe it’s anywhere near all of it. Jill Bolte Taylor’s My Stroke of Insight offers a glimpse of another form of consciousness when the narrative portion of the brain is impaired.)

I think an AI’s interior experience is perhaps incomprehensibly different from ours, to the point where, as you say, they must lack one. I don’t think they lack one, I just think we can’t imagine what it must be like. Well, we can try (or not, if you believe they can’t possibly have one).

We don’t know what’s going on inside the AI labs, so we don’t know what efforts have been made to explore introspection or reflection within an LLM consciousness. I rather expect this is an area where the corporations are treading carefully.

And the role of narrative is the predecessor to the efforts of Leibniz. Language is a form of abstraction, and cause and effect storytelling is a form of reasoning. One that may lack the precision of “logic” or mathematics. Storytelling isn’t always about reasoning, but before the invention of mathematics and formal logic, I suspect that was the entire tool chain. And math and plane geometry were specialized skills that weren’t available to the masses, who must have relied on narrative.

I think the title of this thread, “About The Illusion of thinking,” could be applied to what goes on between our ears as much as in the data centers yoked by the tech-bros. I think it’s not an “illusion” in the sense of something that’s not there; rather, what we think we see, or know, isn’t truly what’s going on.

I haven’t read Bolte Taylor’s book, but the answer my chatbot gave me about that question is unambiguous: it doesn’t know what it means to experience a feeling. It doesn’t know, that is to say that it doesn’t experience it. However, it can describe feelings and how to experience feelings by combining experiences.

If this be error, and upon me proved

I never wrote, nor no man ever loved.

He jests at scars that never felt a wound.