Re the Tinderbox meet-up, Saturday 14 March

Since then I’ve been digging further into the Map → SVG experiment I showed, with (un)expectedly (un)productive results. The demo is interesting but, in truth, limited: the real test is effective output for someone who doesn’t understand Tinderbox, AI and SVG as a combination. In other words, most Tinderbox users, myself included.

I first asked Claude to make an SVG diagram from a PNG. It’s a clever party trick that it can do so, but it missed what no human would: the semantic structure implicit in the map and its use of link types. First cut: a minute. Time to a vaguely useful result: much longer, and still not fully resolved.

But, impressively for the AI (here: Claude), once its solecism re semantics was pointed out, it managed to find and put the semantics back in place … over some time. Pause to consider: an AI doesn’t ‘see’, ‘think’ or ‘understand’ in a human fashion. Its output was very impressive, if not exactly what I wanted. (But how could it ‘know’ what I wanted when I’ve no idea how to express my intent exactly, except in terms of a human mind rather than an AI?)

So the experiment moved from AI (Claude) generating an SVG from a PNG of a Tinderbox map to generating HTML with inline SVG … which allows SVG objects to have click-links. IOW, something more than a ‘dumb’ map.
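To make the click-link idea concrete, here is a minimal Python sketch of what such a page boils down to: each note becomes an SVG `<a>` element wrapping a box and its label, so clicking the box follows the link. The note records (names, positions, sizes and target URLs) are hypothetical placeholders, not anything exported from Tinderbox.

```python
from html import escape

# Hypothetical note records: a name, a box in map coordinates, and a target URL.
NOTES = [
    {"name": "Idea", "x": 20, "y": 20, "w": 120, "h": 40, "url": "idea.html"},
    {"name": "Evidence", "x": 200, "y": 20, "w": 120, "h": 40, "url": "evidence.html"},
]


def note_to_svg(note: dict) -> str:
    """Render one note as a clickable box: an SVG <a> wrapping a rect and its label."""
    x, y, w, h = note["x"], note["y"], note["w"], note["h"]
    return (
        f'<a href="{escape(note["url"])}">'
        f'<rect x="{x}" y="{y}" width="{w}" height="{h}" fill="#ffd" stroke="#333"/>'
        f'<text x="{x + w / 2}" y="{y + h / 2}" text-anchor="middle" '
        f'dominant-baseline="middle">{escape(note["name"])}</text>'
        "</a>"
    )


def page(notes: list[dict], width: int = 400, height: int = 100) -> str:
    """Wrap the clickable notes in an inline <svg> inside a minimal HTML page."""
    body = "\n".join(note_to_svg(n) for n in notes)
    return (
        "<!DOCTYPE html>\n<html><body>\n"
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">\n'
        f"{body}\n</svg>\n</body></html>"
    )


if __name__ == "__main__":
    print(page(NOTES))
```

Saved as an `.html` file and opened in a browser, each box should behave like an ordinary web link; that is the whole difference from a ‘dumb’ SVG picture.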

I looked at making a Claude skill for Tinderbox maps, but it is so far above my immediate skills (and free study time) that, whilst a possible solution, I think an individual assault on this target would be wasted effort. Also, why make a system that can’t ‘see’ guess how a visual diagram works?

Why? All the info needed to recreate a map is already in the TBX’s XML, even if the rubric for reading it is (currently) beyond the ken of the average user. Code-level access (e.g. Claude Code; other AIs are available) can help. But how many people can read/understand XML? Not many. A ‘skill’ letting users employ action code to give the AI the info it needs could help, but ‘just’ writing a ‘skill’ is non-trivial. If you think otherwise, check out @JacobIO’s impressive work on a Tinderbox AI skill.
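As a rough illustration of ‘the info is already in the XML’, the Python sketch below pulls (source, destination, link type) triples out of a TBX-like document using only the standard library. The sample XML is hypothetical and heavily trimmed; the element and attribute names (`item`, `ID`, `attribute name="Name"`, `link`, `sourceid`, `destid`, `linkType`) are my assumptions, loosely modelled on a TBX file, so check them against a real file and the aTbRef `<link>` article before relying on them.

```python
import xml.etree.ElementTree as ET

# Hypothetical, heavily trimmed TBX-like document. Element and attribute
# names are assumptions for illustration; check them against a real .tbx file.
SAMPLE = """<tinderbox>
  <item ID="1"><attribute name="Name">Idea</attribute></item>
  <item ID="2"><attribute name="Name">Evidence</attribute></item>
  <links>
    <link sourceid="2" destid="1" linkType="supports"/>
  </links>
</tinderbox>"""


def read_links(xml_text: str) -> list[tuple]:
    """Return (source name, destination name, link type) for every <link>."""
    root = ET.fromstring(xml_text)
    # Map each item's ID to its Name attribute so links can be reported by name.
    # iter() walks nested items too; findall() only looks at direct children.
    names = {}
    for item in root.iter("item"):
        for attr in item.findall("attribute"):
            if attr.get("name") == "Name":
                names[item.get("ID")] = attr.text or ""
    links = []
    for link in root.iter("link"):
        src, dst = link.get("sourceid"), link.get("destid")
        # Fall back to the raw ID if no matching item name was found.
        links.append((names.get(src, src), names.get(dst, dst), link.get("linkType", "")))
    return links


if __name__ == "__main__":
    for src, dst, kind in read_links(SAMPLE):
        print(f"{src} --{kind}--> {dst}")
```

Reporting links by note name and link type, rather than as lines on a picture, is what keeps the map’s semantics intact, which is exactly what the PNG route lost.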

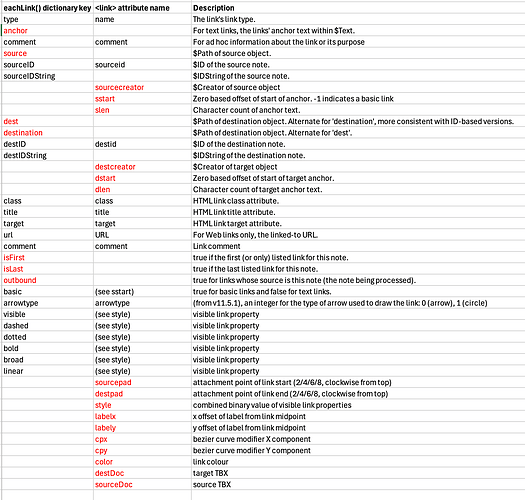

Much, but not all, of the per-link information is accessible via action code (which AI can also parse) using `eachLink(loopVar[,scope]){expressions}`. I’ve just updated that aTbRef article, and the one on the `<link>` XML element, to reflect the degree of (dis)connect.

Another angle I’ve considered, one that avoids the ‘Magic 8-Ball’ aspect of AI: if action code could read all per-link data, it would be easy to export maps as JSON data and hand off map rendering to a separate model.
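To sketch the hand-off idea: once map data exists as JSON, the renderer needs no ‘vision’ at all. The Python below reads a hypothetical export (the `notes`/`links` schema, its field names and the colour-per-link-type table are all assumptions for illustration, not a Tinderbox format) and draws an SVG with each link coloured by its type, so the map’s semantics survive into the output.

```python
import json
from html import escape

# Hypothetical export schema: notes with map positions, links with a type.
MAP_JSON = """{
  "notes": [
    {"id": "a", "name": "Idea", "x": 20, "y": 20, "w": 100, "h": 40},
    {"id": "b", "name": "Evidence", "x": 220, "y": 20, "w": 100, "h": 40}
  ],
  "links": [
    {"source": "b", "dest": "a", "type": "supports"}
  ]
}"""

# Assumed per-link-type colours; unknown types fall back to grey.
LINK_COLOURS = {"supports": "green", "contradicts": "red"}
DEFAULT_COLOUR = "gray"


def centre(note: dict) -> tuple:
    """Centre point of a note's box."""
    return note["x"] + note["w"] / 2, note["y"] + note["h"] / 2


def render_svg(map_data: dict) -> str:
    """Draw links (coloured by type) first, then notes on top of them."""
    notes = {n["id"]: n for n in map_data["notes"]}
    parts = []
    for link in map_data["links"]:
        x1, y1 = centre(notes[link["source"]])
        x2, y2 = centre(notes[link["dest"]])
        colour = LINK_COLOURS.get(link["type"], DEFAULT_COLOUR)
        parts.append(
            f'<line x1="{x1}" y1="{y1}" x2="{x2}" y2="{y2}" '
            f'stroke="{colour}" data-link-type="{escape(link["type"])}"/>'
        )
    for n in map_data["notes"]:
        parts.append(
            f'<rect x="{n["x"]}" y="{n["y"]}" width="{n["w"]}" height="{n["h"]}" '
            'fill="#ffd" stroke="#333"/>'
        )
        cx, cy = centre(n)
        parts.append(f'<text x="{cx}" y="{cy}" text-anchor="middle">{escape(n["name"])}</text>')
    return '<svg xmlns="http://www.w3.org/2000/svg">' + "".join(parts) + "</svg>"


if __name__ == "__main__":
    print(render_svg(json.loads(MAP_JSON)))
```

Links are drawn centre to centre before the boxes, so the boxes sit on top of them. A fuller renderer would also want arrowheads, link labels and per-type colours taken from the map itself rather than a hard-coded table.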

As noted at the meet-up, as users we might think “I’ve paid for the app, why can’t it just … [thing I want]”. But I do think it valid to consider the ROI† of such work, as I suspect only a subset of users need Web maps: those that do really do, but again, across the piece, ROI applies. Someone once said “the needs of the many outweigh the needs of the few”. But if action code, which most users can be taught to use (or be given copy/paste examples of), could read all the info needed to remake a map, this would circumvent a lot of special-to-niche app coding.

Whilst I’m looking at Map view at present, Hyperbolic view‡ and Attribute Browser (AB) view‡ in a web page are also in sight as goals. I think that with a few extra action code access improvements, the community would have the access it needs to close these gaps. This seems more practical than assuming every person on the upper deck of the Clapham omnibus§ will suddenly decide to start learning pure programming.

†. ROI = Return on Investment. We tend to underestimate the amount of skilled work others need to expend ‘just’ to make what we think we might like.

‡. The logic of these views is describable, and the building blocks of said views are accessible, even if not in pre-made form.